Towards Semantic Fast-Forward and Stabilized Egocentric Videos

First International Workshop on Egocentric Perception, Interaction and Computing at European Conference on Computer Vision (EPIC@ECCV) 2016

Abstract

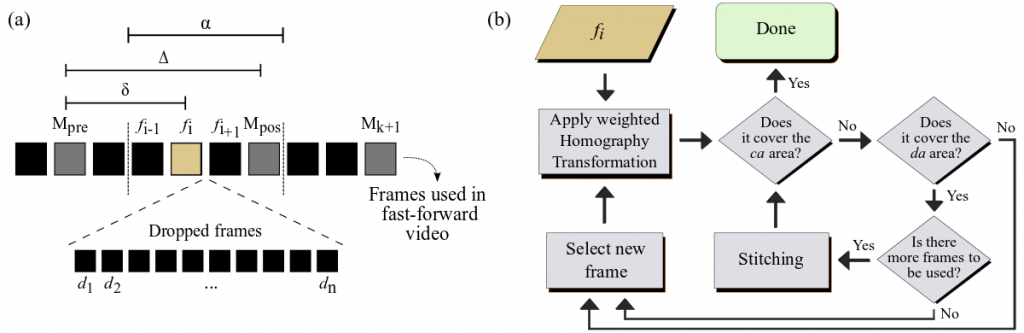

The emergence of low-cost personal mobile devices and wearable cameras, together with the increasing storage capacity of video-sharing websites, has driven a growing interest in first-person videos. Since most recorded videos are long-running streams of unedited content, they are tedious and unpleasant to watch. State-of-the-art fast-forward methods face the challenge of balancing the smoothness of the video against the emphasis on the relevant frames for a given speed-up rate. In this work, we present a methodology capable of summarizing and stabilizing egocentric videos by extracting semantic information from the frames. This paper also describes a dataset collection with several semantically labeled videos and introduces a new smoothness evaluation metric for egocentric videos, which is used to test our method.

Keywords: Semantic Information, First-person Video, Fast-Forward, Egocentric Stabilization
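To make the trade-off named in the abstract concrete, the sketch below shows one generic way to pick frames so that jumps stay close to a target speed-up while frames with high semantic scores are favoured. It is an illustrative shortest-path formulation, not the method of the paper: the function `select_frames`, the per-frame `semantic` scores, the quadratic jump cost, and the `weight` and `max_skip` parameters are all assumptions made for this example, and stabilization is not covered.

```python
from typing import List


def select_frames(semantic: List[float], speedup: int,
                  max_skip: int, weight: float = 1.0) -> List[int]:
    """Pick frame indices from the first to the last frame (hypothetical sketch).

    Jumping from frame i to frame j costs (j - i - speedup)^2, which
    penalizes deviation from the target speed-up, minus weight * semantic[j],
    which rewards landing on semantically relevant frames.
    """
    n = len(semantic)
    inf = float("inf")
    best = [inf] * n   # best[j]: lowest accumulated cost of a path ending at j
    prev = [-1] * n    # prev[j]: predecessor of j on that path
    best[0] = 0.0

    # Frames are visited in temporal order, so a single forward pass over
    # this directed acyclic graph yields the minimum-cost path.
    for j in range(1, n):
        for i in range(max(0, j - max_skip), j):
            cost = best[i] + (j - i - speedup) ** 2 - weight * semantic[j]
            if cost < best[j]:
                best[j] = cost
                prev[j] = i

    # Walk back from the last frame to recover the selected indices.
    path = []
    k = n - 1
    while k != -1:
        path.append(k)
        k = prev[k]
    return path[::-1]


if __name__ == "__main__":
    # Toy scores: frames 5 to 8 are assumed to be semantically relevant.
    scores = [0, 0, 0, 0, 0, 5, 5, 5, 5, 0, 0, 0, 0]
    print(select_frames(scores, speedup=4, max_skip=8))
```

With uniform scores the selection degenerates to regular sampling at the target speed-up; raising `weight` lets the path deviate from that rate to land on relevant frames, at the expense of less regular jumps.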

Methodology and Results

Citation

@InBook{Silva2016,
  Title = {Towards Semantic Fast-Forward and Stabilized Egocentric Videos},
  Booktitle = {International Workshop on Egocentric Perception, Interaction and Computing (EPIC) at European Conference on Computer Vision (ECCV)},
  Author = {Silva, Michel Melo and Ramos, Washington Luis Souza and Ferreira, Joao Pedro Klock and Campos, Mario Fernando Montenegro and Nascimento, Erickson Rangel},
  Year = {2016},
  Address = {Amsterdam, NL},
  Month = {Oct.},
  Pages = {557--571},
  Doi = {10.1007/978-3-319-46604-0_40},
  ISBN = {978-3-319-46604-0}
}

Baselines

We compare the proposed methodology against the following methods:

- EgoSampling – Poleg et al., EgoSampling: Fast-forward and stereo for egocentric videos, CVPR 2015.

- Microsoft Hyperlapse – Joshi et al., Real-time hyperlapse creation via optimal frame selection, ACM Trans. Graph. 2015.

- Fast-Forward based on Semantic Extraction – Ramos et al., Fast-forward video based on semantic extraction, ICIP 2016.

Datasets

We conducted the experimental evaluation using the following dataset:

- Semantic dataset – Silva et al., Towards Semantic Fast-Forward and Stabilized Egocentric Videos, EPIC 2016.

Authors

Michel Melo da Silva

Researcher

Washington Luis de Souza Ramos

PhD Candidate

João Pedro Klock Ferreira

Undergraduate Student

Mario F. M. Campos

Professor